For the past few months, I’ve been feeling a bit stuck in my job search.

I’m a Lead Developer/Engineering Manager with a background in Unity3D/Unreal Engine and supporting systems like authentication, cloud storage, release pipelines, and GCP. I’ve always enjoyed solving a wide range of problems, but with so much shifting in the tech world, especially with AI and automation, I wasn’t sure where to steer next.

Do I go deeper into game dev? Keep leaning into web apps? Try to break into AI?

Everything felt exciting, but also extremely overwhelming.

Following Curiosity Instead of a Job Description

Instead of spinning my wheels, I decided to build something. No pressure, no resume goal. I just wanted to make something I’d want to use myself. Worst case, I’d get to learn some new stuff along the way.

Could I build an AI assistant that answers city-specific questions using real conversations and local knowledge?

I started there. Something simple, something helpful. A tool I’d genuinely use as a digital nomad, and a clear example of turning ambiguous AI ideas into shipped value.

What I Ended Up Building

By the end of the day, I had the foundation for a full-stack, AI-integrated knowledge assistant. Here’s a quick overview of the stack and workflows:

- OpenAI embeddings for semantic search over chunked content

- Supabase + pgvector for vector similarity search

- Next.js (App Router) with Edge Functions for secure APIs

- PostgreSQL functions and RLS policies to protect context-based data

- A design that supports turning helpful user responses into persisted, AI-augmented knowledge

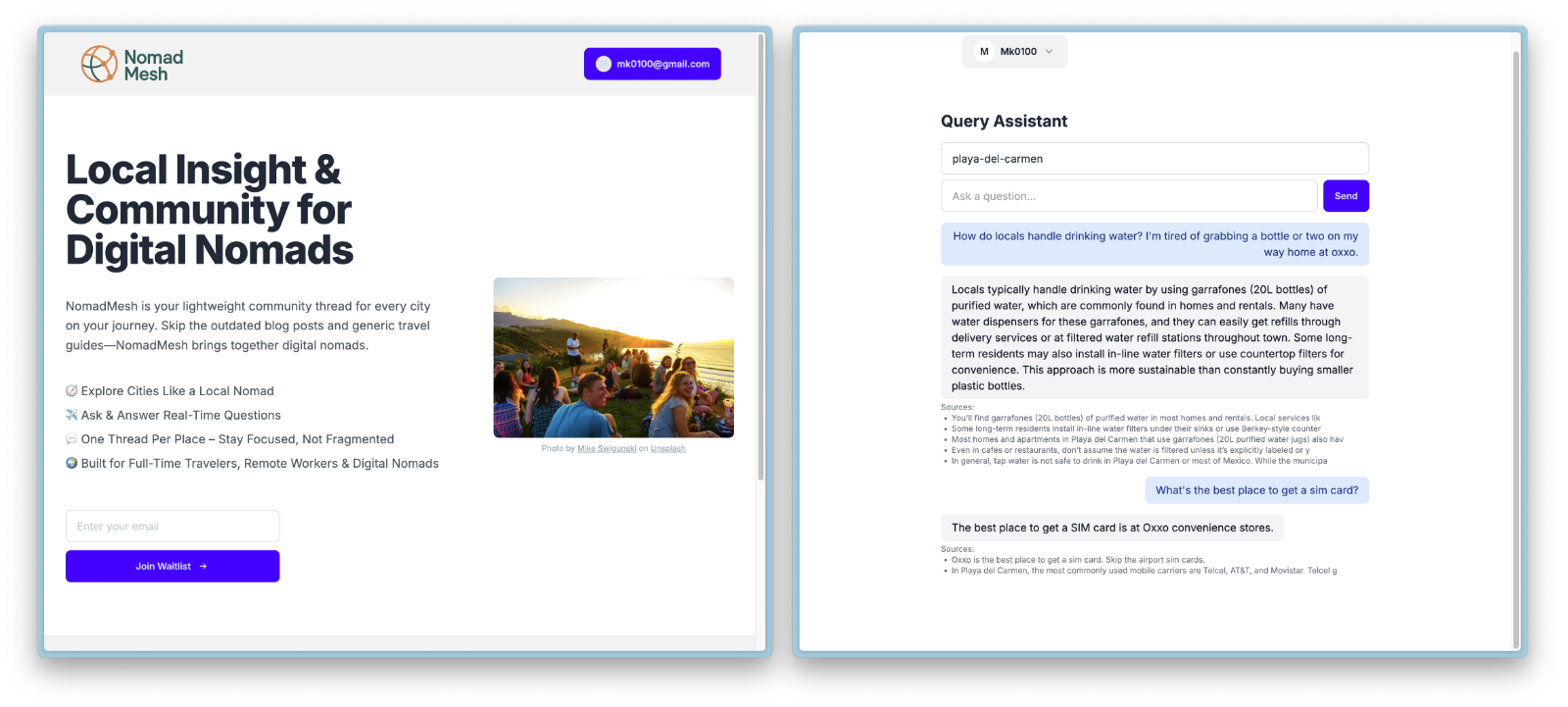

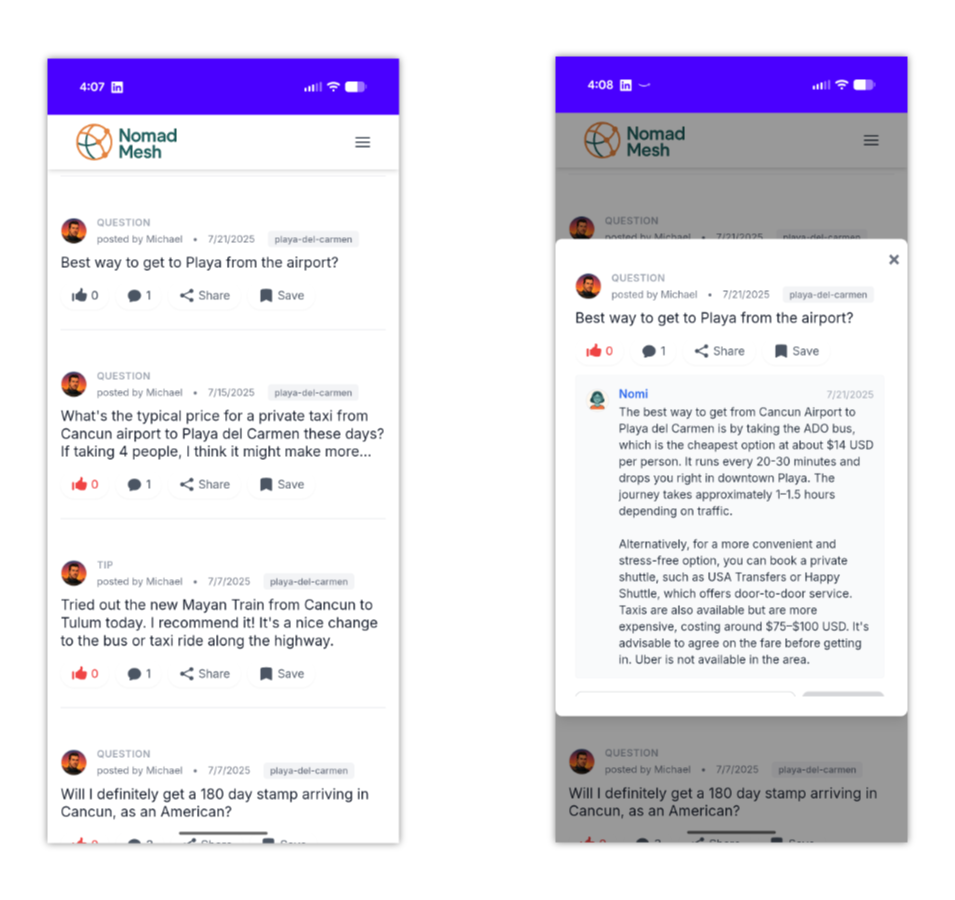

I called it NomadMesh. A super early version of a smarter way to share and discover useful location-specific information.

What Shipping Looked Like

My product-owner brain kicked in immediately. Before writing much code, I wrote down the guardrails that would make NomadMesh useful to an actual traveler community:

- Defined a pilot persona + success metric: digital nomads planning a new city each quarter; success = a relevant answer in less than 3 seconds using at least one community-sourced citation.

- Scoped a one-day MVP roadmap: ingestion, RAG pipeline, and a thin chat UI. Everything else dropped into a “later” column so I could show progress fast.

- Instrumented latency + cost budgets: simple tracing around embeddings, vector search, and completion calls so I could explain tradeoffs to stakeholders (or future teammates) instead of hand-waving.

- Documented a growth path: outlined how adding contributor roles, trust signals, and revenue hooks would evolve the product from prototype to community platform.
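
A minimal sketch of what that kind of latency instrumentation might look like in TypeScript. The names (`Span`, `startSpan`, `meetsPilotMetric`) are illustrative assumptions, not code lifted from NomadMesh, and the 3-second budget and one-citation rule simply mirror the pilot success metric above.

```typescript
// Hypothetical tracing helper; names and shapes are assumptions for illustration.
type Span = { label: string; ms: number };

// Starts a timer for one pipeline step and returns a function that,
// when called, records the elapsed milliseconds into `spans`.
// The clock is injectable so the helper can be tested deterministically.
function startSpan(
  label: string,
  spans: Span[],
  now: () => number = Date.now
): () => void {
  const start = now();
  return () => {
    spans.push({ label, ms: now() - start });
  };
}

// Pilot success check: total latency under the budget (3 seconds by default)
// and at least one community-sourced citation in the answer.
function meetsPilotMetric(
  spans: Span[],
  citationCount: number,
  budgetMs = 3000
): boolean {
  const totalMs = spans.reduce((sum, s) => sum + s.ms, 0);
  return totalMs < budgetMs && citationCount >= 1;
}

// Usage inside a request handler (sketch; `embedQuestion` is hypothetical):
//   const spans: Span[] = [];
//   const endEmbed = startSpan("embedding", spans);
//   const vector = await embedQuestion(question);
//   endEmbed();
```

Wrapping the embedding, vector search, and completion calls the same way gives a per-step breakdown, which is what makes the latency and cost tradeoffs explainable rather than guessed.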

Rediscovering RAG (for Real This Time)

Funny enough, this wasn’t my first time using Retrieval-Augmented Generation (RAG)... I just didn’t know the name for it back then.

In a past project, I had built a system that maintained a structured knowledge base and fed relevant entries into GPT to guide its responses. It worked well for narrowing down options and making the AI feel more context-aware, even if I didn’t fully understand the underlying pattern at the time.

This time around, I did know what I was doing.

After reading more about RAG, I wanted to implement a proper version using a vectorized database. With OpenAI embeddings and pgvector, I could pull in the most relevant, community-verified content before generating a response. That gave the assistant a grounded, trustworthy feel, more like a local expert remixing shared experience than a model guessing in the dark.

RAG became the backbone of turning scattered posts and guides into something persistent and usable, a living knowledge base that actually gets smarter the more people contribute.

A Quick Technical Deep Dive

To keep hallucinations low, I split the pipeline into a few discrete control points:

- Chunk + classify: every post is chunked into ~200-word slices, tagged by city, and annotated with a sentiment score to deprioritize rants.

- Policy-aware retrieval: pgvector searches run through Postgres functions that enforce RLS, so users only pull context for the cities they’re allowed to see.

- Prompt budgeter: I wrote a tiny utility that right-sizes the prompt window to stay within latency/cost targets while still giving the model enough nuance.

- Answer critiques: completions are pushed through a lightweight verifier that flags responses without citations so I can review and harden prompts later.
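
To make the last two control points concrete, here is a rough TypeScript sketch of a prompt budgeter and a citation check. Everything in it is an assumption for illustration: the `Chunk` shape, the four-characters-per-token estimate (a common rule of thumb, not a real tokenizer), and the `[id]` citation format are stand-ins rather than the actual NomadMesh implementation.

```typescript
// Hypothetical shapes and helpers; not taken from the NomadMesh codebase.
type Chunk = { id: string; text: string; score: number };

// Rough token estimate: about 4 characters per token (heuristic only).
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Greedily keeps the highest-scoring chunks that fit within the token budget.
// Chunks that would overflow the budget are skipped, so a smaller
// lower-ranked chunk can still be included.
function fitToBudget(chunks: Chunk[], maxTokens: number): Chunk[] {
  const ranked = chunks.slice().sort((a, b) => b.score - a.score);
  const kept: Chunk[] = [];
  let used = 0;
  for (const chunk of ranked) {
    const cost = estimateTokens(chunk.text);
    if (used + cost > maxTokens) continue;
    kept.push(chunk);
    used += cost;
  }
  return kept;
}

// Returns true when the answer cites none of the supplied chunks,
// assuming citations are written as "[chunk-id]" in the answer text.
function lacksCitation(answer: string, chunks: Chunk[]): boolean {
  return !chunks.some((c) => answer.indexOf(`[${c.id}]`) !== -1);
}
```

A greedy fit is the simplest option here; it trades optimal packing for predictability, which is usually fine when the goal is a stable latency and cost ceiling.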

It’s not production-grade yet, but it shows how I pair system design with product guardrails when I own the outcome end to end.

How It Worked (and What I Noticed)

The idea was pretty simple: take community posts and city guides, break them into bite-sized chunks, and store them in a vectorized database. When someone asks a question, I first run a similarity search to retrieve the most relevant knowledge base entries. Then, I pass that context to OpenAI to help craft a helpful, grounded response. But a few things surprised me along the way:

- Breaking stuff up is everything. Big blocks of text didn’t play nice with vector search. Once I started splitting content into smaller, more focused pieces (and tagging them by city), the results got way better.

- The AI needs guardrails. Plugging GPT straight into search wasn’t enough. It would start making things up. But once I fed it real, community-verified chunks as context, the answers felt grounded and useful.

- Less is more (sometimes). Giving the model too much context made responses weirdly generic, but too little made them lose nuance. Tuning that balance feels like an art.

- Fast responses feel magical. Supabase Edge Functions + Next.js API routes kept the experience snappy, even while doing embeddings and search in real time.
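
The chunk-then-retrieve flow can be sketched in a few lines of TypeScript. This is an in-memory stand-in under stated assumptions: in the real stack the similarity search runs inside Postgres via pgvector, and the embeddings come from OpenAI rather than being passed in by hand. The `Entry` type and the function names are hypothetical, and the cosine helper assumes both vectors have the same length.

```typescript
// Hypothetical in-memory model of the knowledge base; illustration only.
type Entry = { city: string; text: string; embedding: number[] };

// Splits text into slices of at most `size` words.
function chunkWords(text: string, size = 200): string[] {
  const words = text.split(/\s+/).filter((w) => w.length > 0);
  const chunks: string[] = [];
  for (let i = 0; i < words.length; i += size) {
    chunks.push(words.slice(i, i + size).join(" "));
  }
  return chunks;
}

// Cosine similarity between two equal-length vectors (0 if either is all zeros).
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Filters entries by city tag, then returns the k most similar to the query.
function topK(query: number[], city: string, entries: Entry[], k = 3): Entry[] {
  return entries
    .filter((e) => e.city === city)
    .map((e) => ({ entry: e, score: cosine(query, e.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((r) => r.entry);
}
```

Filtering by city before ranking mirrors the tagging step described above: it keeps a strong match from the wrong city out of the context handed to the model.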

In the end, it started to feel like the assistant was actually tapping into a living knowledge base, not just spitting out static answers. That’s when I knew I was onto something.

Why It Helped More Than Just My Skills

This wasn’t just about learning a stack. It helped me reflect on what I actually want to spend my time on:

- Solving real, human problems with thoughtful interfaces

- Building tools where AI makes community input more usable, not noisier

- Writing code that brings product ideas to life end-to-end

It also reminded me that I enjoy building things that feel useful from day one.

Product Ownership Takeaways

- Translate ambiguity into roadmaps. I turned “maybe an AI travel thing?” into a backlog, stack, and measurable success criteria within a single day.

- Earn trust with clarity. Documenting the data model, RLS rules, and prompt budgets means someone else could pick this up and understand how it works without me in the room.

- Balance craft + communication. I paired the engineering spike with a quick walkthrough for a couple of product friends, then folded their feedback into the next sprint plan.

Where I’m Heading Next

This project gave me a much-needed boost of momentum and clarity. It also confirmed a few things about what I want from my next role:

- A team working on products people enjoy and rely on

- A space where AI + interfaces intersect in meaningful, practical ways

- A role that values both building and thinking, not just task execution

As for NomadMesh, I plan to keep iterating. My goal is to evolve the interface into something that seamlessly blends a group post/comment model with an AI-digested knowledge base. The vision is to create a platform where community discussions don’t just disappear, but are distilled into persistent, usable insights that keep getting smarter over time.

If I take it far enough, I hope NomadMesh will earn a spot on my projects portfolio page as a polished, real-world example of what’s possible at the intersection of community, AI, and thoughtful product design.

Curious how I’m approaching this or want to jam on responsible AI product bets? Ping me. I’m always up for talking shop.

// Mike